I clearly remember the day I bought my iPad 2. On April 29th, 2011, I got the iPad, and every single day since then I have thought about how I could develop software with it. Although the iPad is a very capable machine that gets stronger with each generation, it is not possible to use it as a full-featured development machine. In this post, I would like to share my experience of developing simple Java code with an iPad.

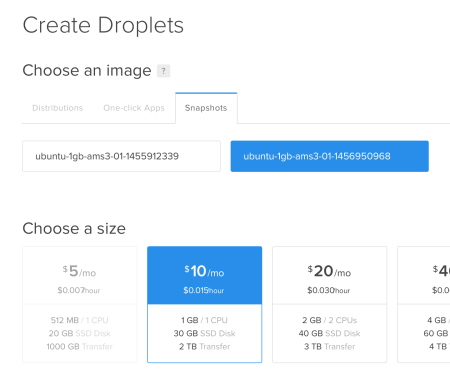

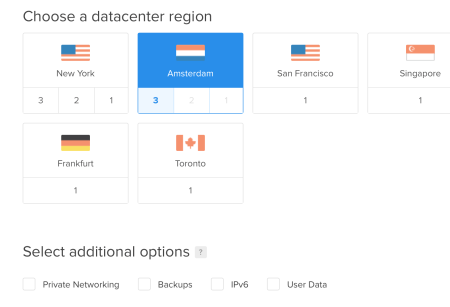

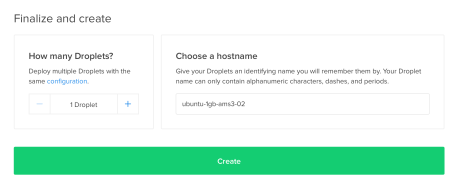

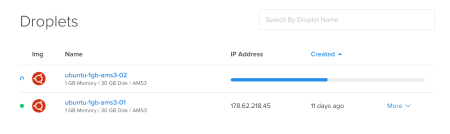

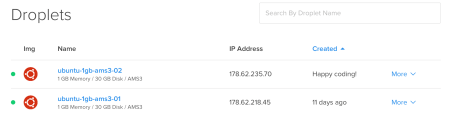

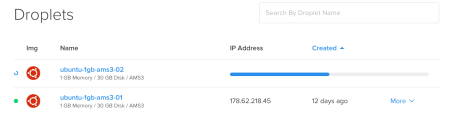

The code editing is done on the iPad, and the Java compiling is done on a DigitalOcean VPS. To me, it is much simpler and more pleasant to use iOS text editing apps than vim or other tools on the VPS. I also add GitHub to the mix for preserving, sharing, and version-controlling the source code. Either a git client can run on the iPad, or the command line can be used on the VPS. I explain both.

The iOS Apps

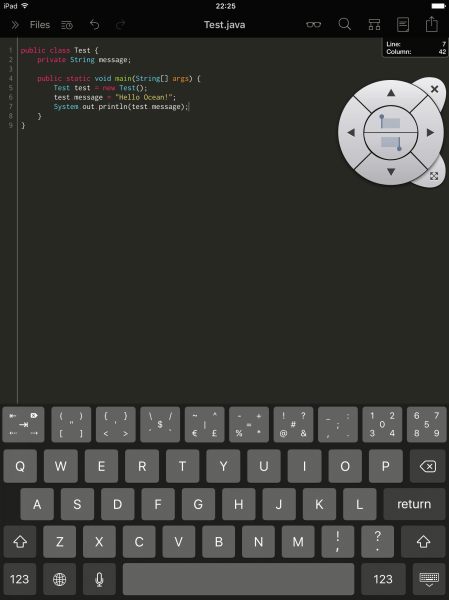

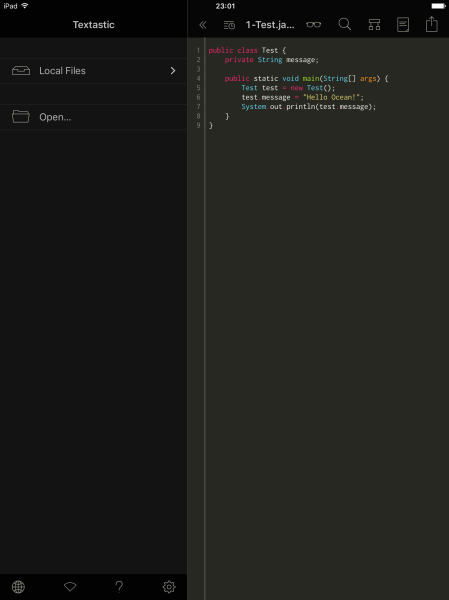

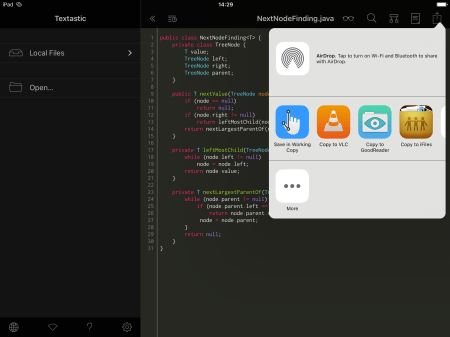

I chose Textastic for editing the source code. It is one of the best productivity tools developed for the iPad. The additional key row is priceless, especially for coders. It can also make SFTP connections. Having both features in one app made my choice really straightforward. You can see the simple Test.java file below. The selection disk in the upper right corner makes multi-line selection much easier.

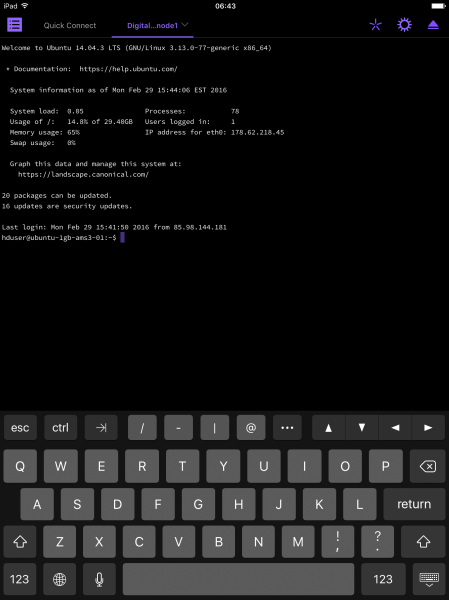

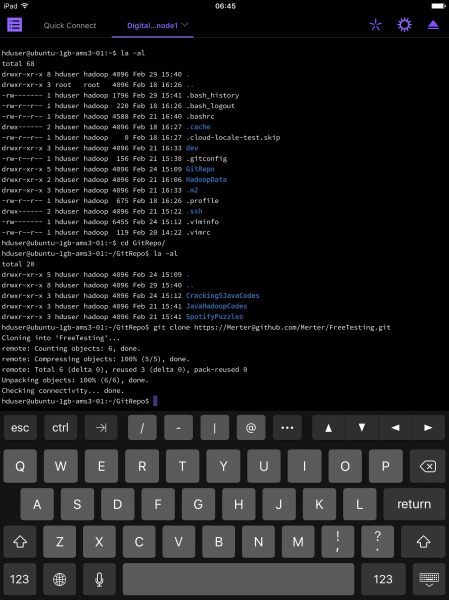

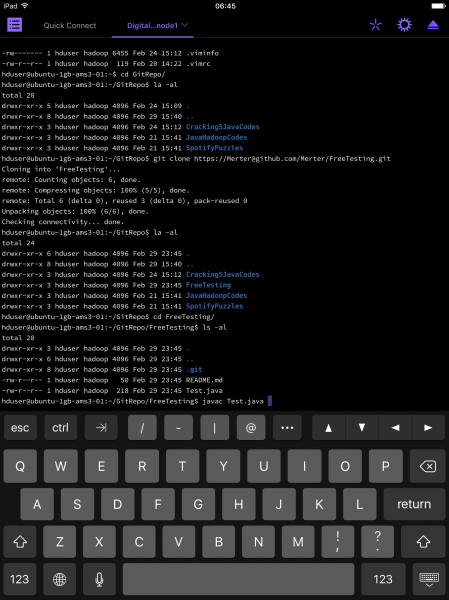

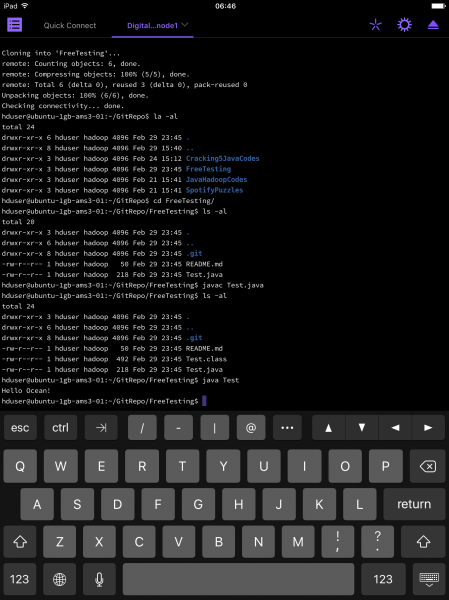

We need to connect to our DigitalOcean server over SSH. For that purpose, I chose Prompt 2. Its clean interface drew my attention, and typing on the iPad keyboard feels very responsive. There are only four extra keys active above the keyboard. You can switch to other predefined sets, but these are the ones I use most. The Tab key is vital and saves a lot of time.

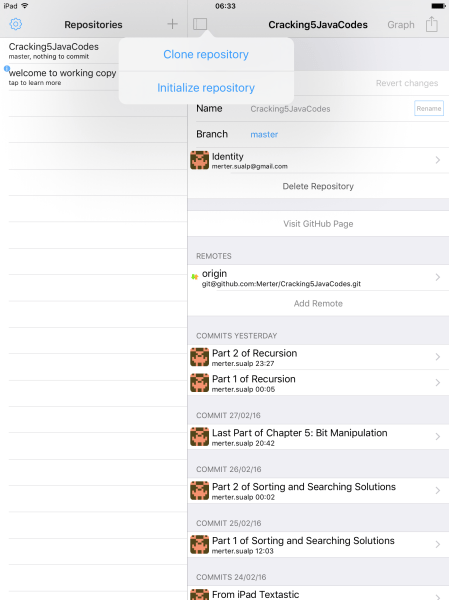

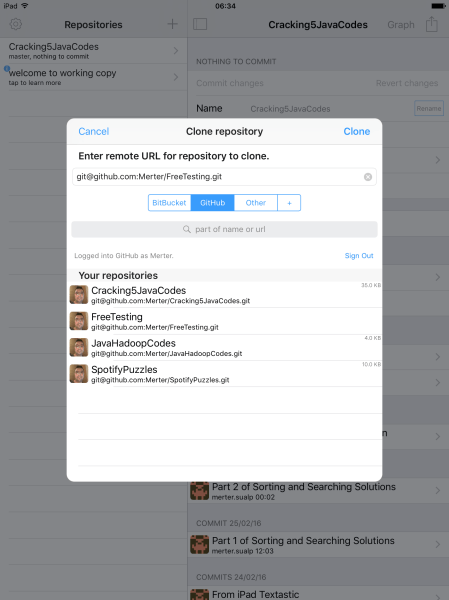

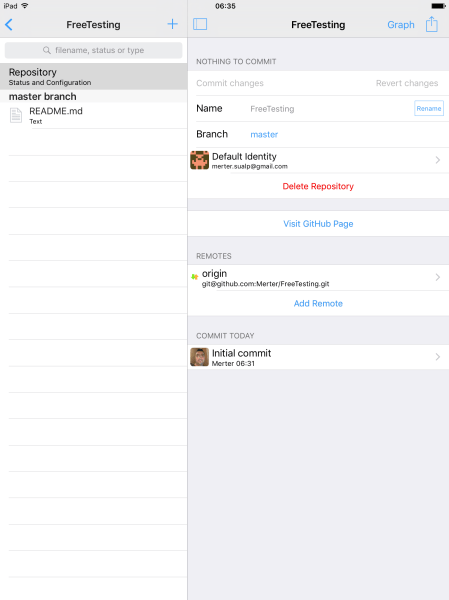

These two apps are our main tools. Optionally, we can use the Working Copy Enterprise app as a git client. It has a free version, but that version cannot push your commits, so it is pretty much useless for our purposes. I recommend spending the extra money to get the full feature set. I will cover how to use it later in this post.

Now we have two paths to choose from. The first is to edit the source code, send it to the VPS, compile it there, and commit and push the modifications to GitHub from the command line. The second path starts the same way, but after a successful compile we do not use the command line. Instead, we rely on Working Copy as our git client.

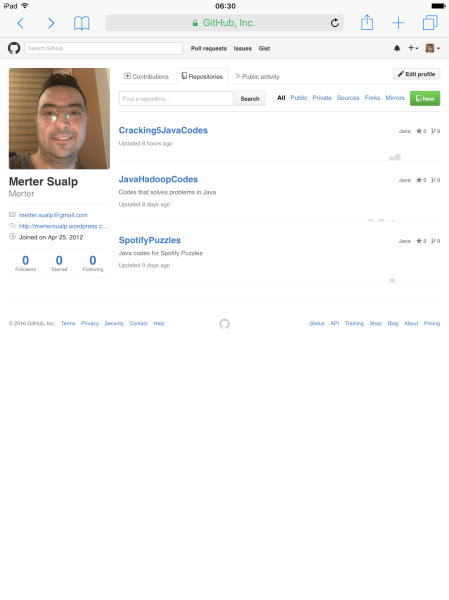

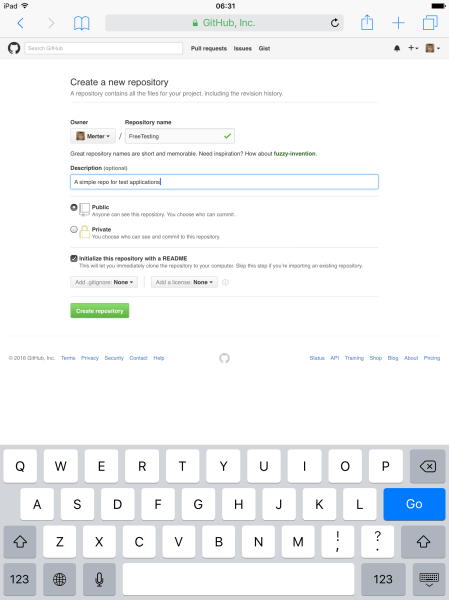

First, let’s create a git repository on GitHub.

From Safari on the iPad, I logged in to my account and created a repository named FreeTesting.

The Direct Way to GitHub

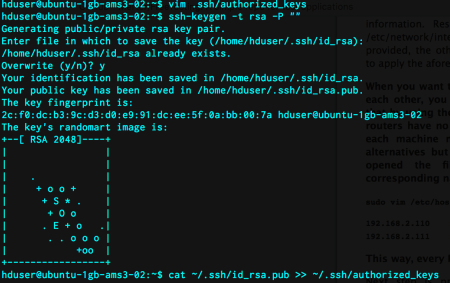

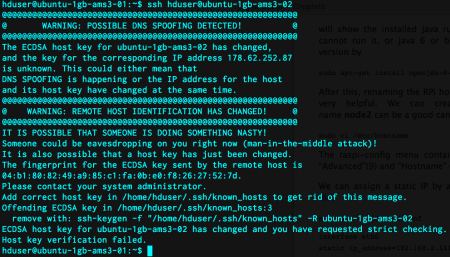

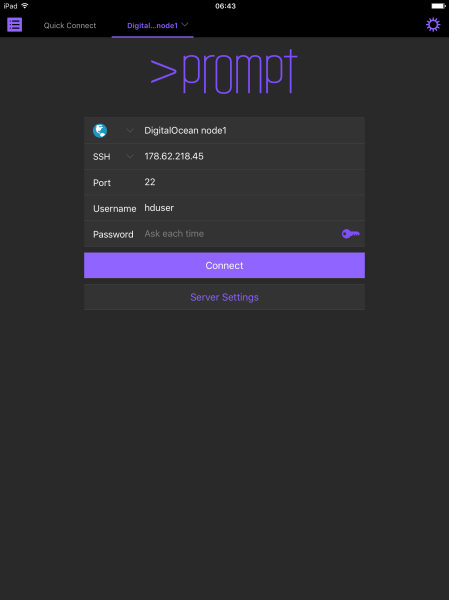

We need to set up Prompt 2 to log in to our VPS. Whichever path we take, the VPS is our main compile platform.

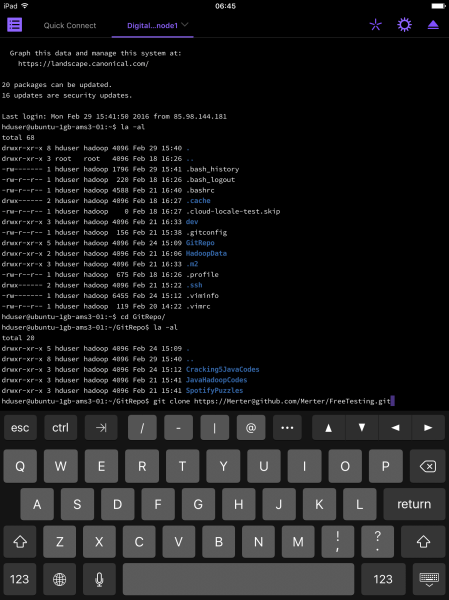

After logging in, we are welcomed by the command line. We clone our brand new repository to a VPS folder using the command:

git clone https://Merter@github.com/Merter/FreeTesting.git

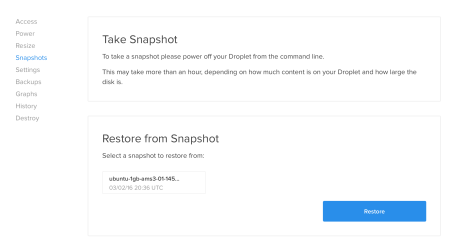

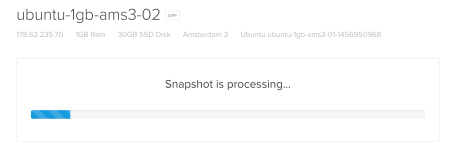

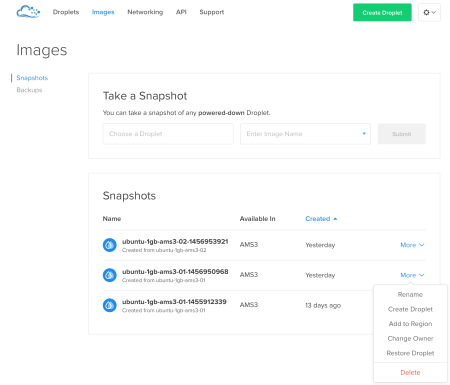

So the DigitalOcean VPS now knows about our GitHub repository, but the editor does not. Next, we will create our first Java source file and make the necessary connection between the Textastic editor and the VPS.

We create a new local file in Textastic.

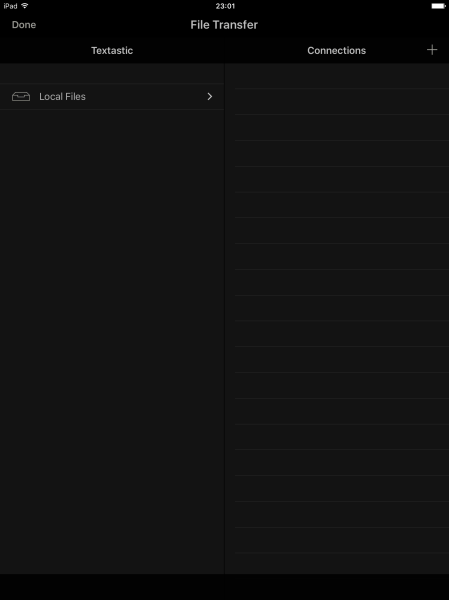

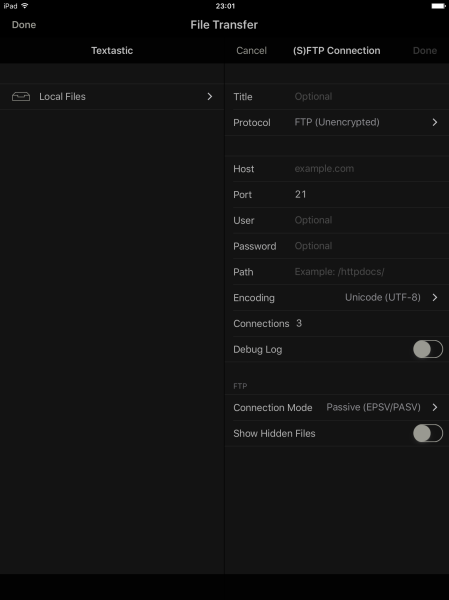

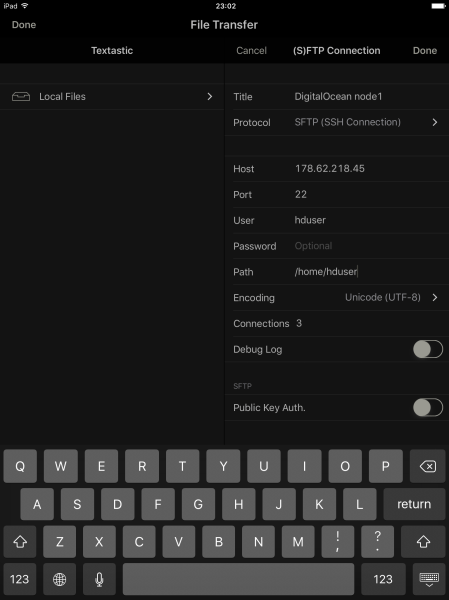

We need to connect this file to our remote server. The File Transfer section shows the local files and remote connections side by side.

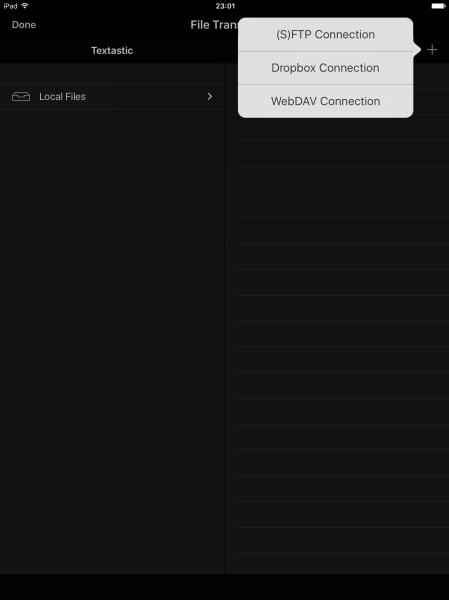

We are adding an (S)FTP Connection. This is where our VPS is.

Just fill in the information needed to log in to the remote server.

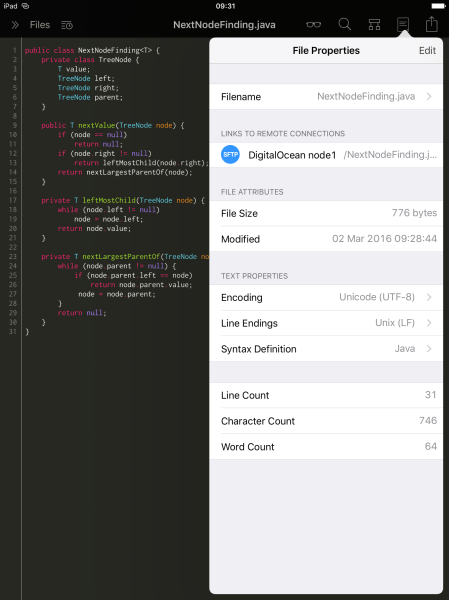

When we upload the file, it becomes connected to the respective remote location. Here, we see the basic file attributes and text information.

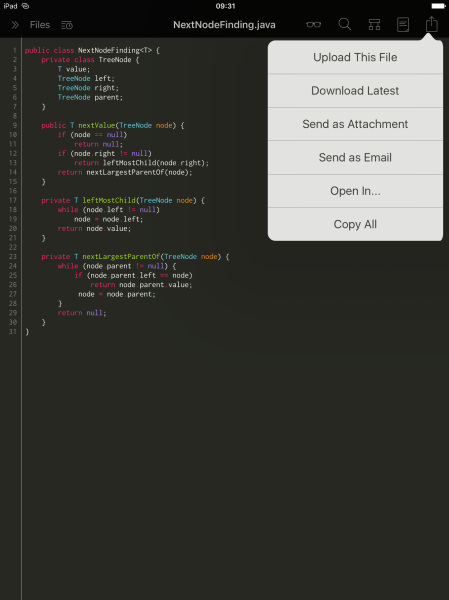

Once the connection is set up, we can either upload the version we edited in Textastic, or download the latest version of the file from the server, which overwrites our local changes.

We now have the file on the VPS, so we can compile and run it to see the results. If the code has compile-time errors or produces unexpected results, we can edit it in the same way and update the file on the VPS.
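The post does not list the contents of Test.java, so the following is only a minimal sketch of what such a file might look like. The "Hello World" text is borrowed from the commit message used in this post, and the `greeting` helper is a hypothetical name added for illustration. The comments mention the standard `javac` and `java` commands that would compile and run it on the VPS.

```java
// Test.java: hypothetical contents; the post does not show the actual source.
// On the VPS, compile with:  javac Test.java
// and then run with:         java Test
class Test {

    // Returns the text to print. Kept as a separate method (an illustrative
    // choice) so there is one obvious place to edit before re-uploading.
    static String greeting() {
        return "Hello World";
    }

    public static void main(String[] args) {
        // Prints the greeting followed by a newline.
        System.out.println(greeting());
    }
}
```

If the compile step reports an error, the fix is made in Textastic, the file is uploaded again, and the same two commands are repeated.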

We will send our modifications to GitHub from the command line on the VPS. First, we add the modified files. Then we commit them, and finally we push the changes to GitHub.

git status

git add Test.java

git commit -m "Hello World"

git push origin master

The Working Copy Way

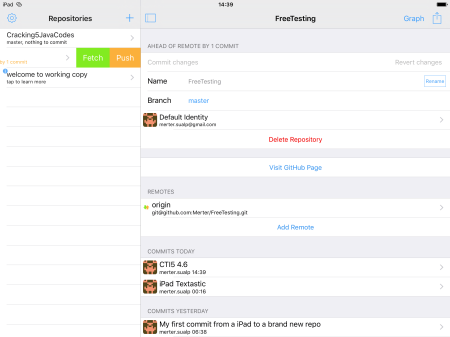

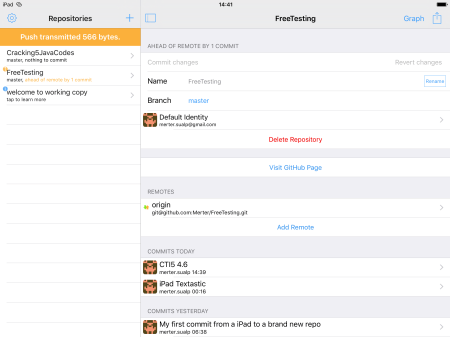

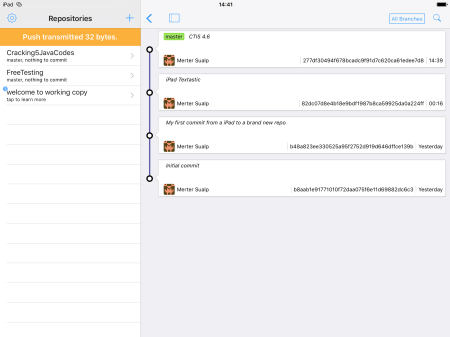

The other way to achieve the same result is to skip the command line after a successful compilation. The Working Copy app becomes our git client, so we do the save (add), commit, and push operations with it. The beauty of this app is that we can see the branches and commits, along with their times and messages, in a nice graphical representation. Moreover, on an iPad we should take advantage of touch input as much as possible.

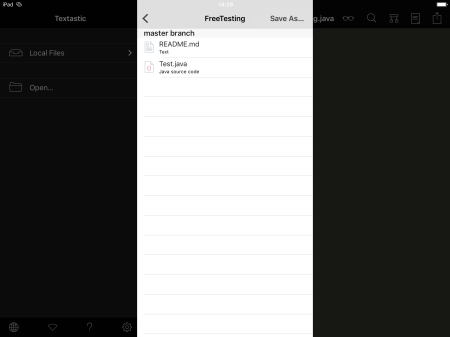

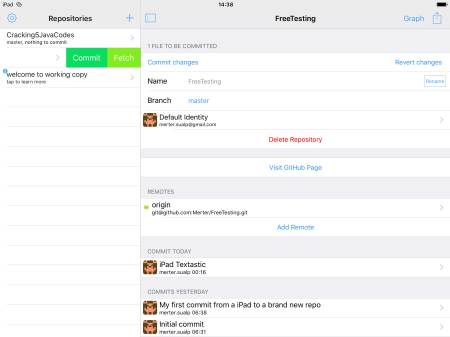

The first thing we will do with Working Copy is to clone the repository we have created.

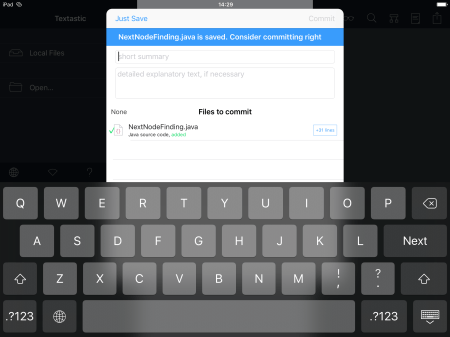

After cloning the repository, we return to Textastic, since our source code is still there. We save the file to the corresponding repository in Working Copy.

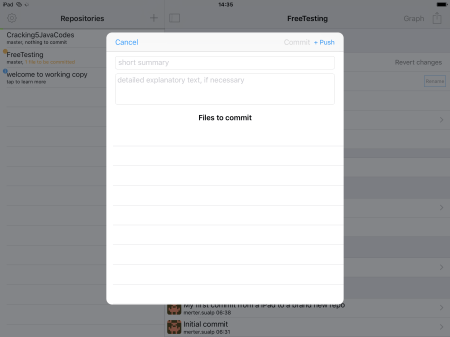

Here we only saved the file, but we could also commit it with a message at the same time.

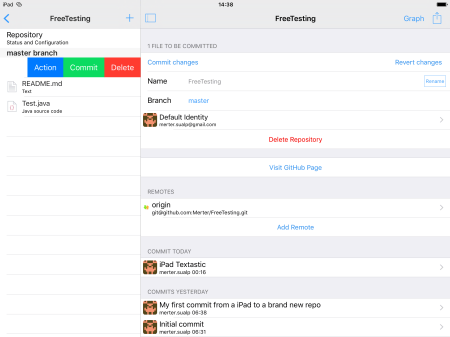

We have the option to commit the files later. There are two ways to do that. Either we commit all the saved changes in the repository…

… or we can commit the individual file.

Either way, we can also push them while committing.

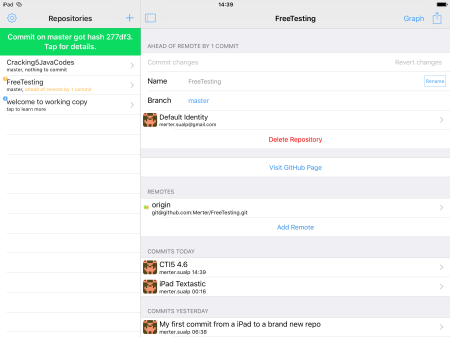

I preferred to push later. A push applies to a branch of the repository.

We can see the overall situation and history of the repository with the following graph.

Working on an iPad turned out to be a pleasant, smooth flow. I did not expect to do all of this on an iPad mini, so it was very nice to accomplish this feat.

I hope you enjoy it as much as I did. Happy coding…