Whenever I use a computer whose operating system is not a variant of Linux, I almost always install a virtual Ubuntu system on it. On Windows, my only choice is VirtualBox. In my early days on the Mac, I paid for Parallels and VMware Fusion, but in the end I settled on VirtualBox.

Virtual systems have many advantages. You can share resources with your host operating system without crippling or accidentally damaging it. You are free to play with different configurations, tools and deployments. You can take snapshots and return to them if something goes wrong. The whole virtual disk is a folder that you can easily transfer from machine to machine.

On the downside, they are limited by your host’s resources, and RAM is the most critical one. Although Linux is frugal with it, you still have to set aside a few GB of your RAM. For me, by today’s standards, the minimum amount of RAM for running two operating systems at the same time is 8 GB.

While experimenting with my RPi cluster and Hadoop, I developed the code on a virtual Ubuntu 14.04 LTS. For a long time, though, I had been thinking about setting up a dedicated system that I could connect to remotely over ssh, and moving my development work there. That way, I would even have the option of using an iPhone or an iPad, since there are many ssh apps in the App Store.

After reading Marco Arment’s post about it and listening to two episodes of his Under the Radar podcast, I decided to give it a try and dive into DigitalOcean. I created my very first Droplet.

We can control most of the droplets’ settings with an iOS app. I use DigitalOcean Manager by Philip Heinser.

I opened up my Hadoop installation post and installed Java 7, then Hadoop 2.7.2. Nothing changes from the installation of version 2.7.1 other than the name of the machine: node1 is replaced with ubuntu-1gb-ams3-01, as can be seen above.

After the installation, I ran the WordCount test for smallfile.txt and mediumfile.txt. They took 10 and 30 seconds respectively. That is better than my virtual Ubuntu! I am really impressed.
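For anyone who wants to repeat a timing like this, here is a rough sketch of how it could be done. It is not necessarily the exact job I ran: it assumes the WordCount from the examples jar that ships with Hadoop 2.7.2, a `HADOOP_HOME` variable pointing at the installation (the `/usr/local/hadoop` fallback is only a guess), and input files that are already in HDFS. The function names and the `DRY_RUN` switch are made up for illustration.

```shell
# Sketch: time one WordCount run. All names here are illustrative assumptions.

# With DRY_RUN=1 the command is printed instead of executed.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

time_wordcount() {
  local input="$1"    # HDFS path of the input file (hypothetical)
  local output="$2"   # HDFS output directory; it must not exist yet
  # Assumption: the examples jar bundled with Hadoop 2.7.2 stands in for the WordCount job.
  local jar="${HADOOP_HOME:-/usr/local/hadoop}/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar"
  local start
  local end

  start=$(date +%s)
  run hadoop jar "$jar" wordcount "$input" "$output" || return 1
  end=$(date +%s)

  # Wall-clock time in whole seconds, including job start-up overhead.
  echo "elapsed: $((end - start))s"
}
```

Calling `time_wordcount /input/smallfile.txt /output/small` (both paths are placeholders) runs the job and prints the elapsed seconds. Setting `DRY_RUN=1` first only prints the command, which is handy for checking the jar path.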

As far as I know, we pay for the droplet even if it is powered off, because DigitalOcean keeps our data, CPU and IP reserved for us. There is a way to avoid that charge, but I do not recommend it. Here is how, and here is why.

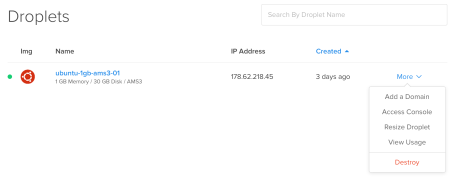

Either in the iOS app or on the DigitalOcean web site, we first power the droplet off. That is mandatory for the second step. Then we take a snapshot of the droplet. After that, we destroy the droplet. This does NOT destroy the snapshot. That’s the critical part. We can create a droplet from that snapshot whenever we want. Until then, DigitalOcean no longer charges for that droplet.
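I did these steps by hand, but the same three steps can probably be scripted. The sketch below assumes DigitalOcean's `doctl` command-line tool is installed and authenticated, which is not something I used for this post. The function names, the `DRY_RUN` switch, the droplet ID and the snapshot name are all placeholders.

```shell
# Sketch: power off, snapshot, then destroy a droplet.
# Assumption: doctl is installed and already authenticated.

# With DRY_RUN=1 the commands are printed instead of executed.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

park_droplet() {
  local droplet_id="$1"      # numeric droplet ID (placeholder in the usage below)
  local snapshot_name="$2"   # any name you choose for the snapshot

  # 1. Power the droplet off; --wait blocks until the action has finished.
  run doctl compute droplet-action power-off "$droplet_id" --wait || return 1
  # 2. Take a snapshot of the powered-off droplet.
  run doctl compute droplet-action snapshot "$droplet_id" --snapshot-name "$snapshot_name" --wait || return 1
  # 3. Destroy the droplet without the confirmation prompt; the snapshot is kept.
  run doctl compute droplet delete "$droplet_id" --force || return 1
}
```

A call such as `park_droplet 12345 hadoop-backup` would run the three steps in order and stop at the first failure. Since the last step is destructive, it is worth trying it with `DRY_RUN=1` first, which only prints the commands.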

So why do I not recommend this? I tried it and found that the root password changes. That’s not a problem for my Hadoop experiments. But the IP also changes, and that is a problem: we cannot start HDFS without making the necessary changes. The commands that I executed are as follows:

    rm ~/.ssh/authorized_keys
    ssh-keygen -t rsa -P ""
    cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys
    ssh-keygen -f "/home/hduser/.ssh/known_hosts" -R localhost
    ssh-keygen -f "/home/hduser/.ssh/known_hosts" -R 0.0.0.0
