Before writing our first code, we need to prepare our development environment. We will use Java as our programming language and also write unit tests. To keep things as simple and generic as possible, I preferred to use vim as my editor and the command line as my build tool. Since we are using Java and do not depend on any IDE, we have to set up our own classpath. That is the critical step I will explain here.

To compile our Java Hadoop code, we will add the HADOOP_CLASSPATH environment variable to our .bashrc file as:

export HADOOP_CLASSPATH=$($HADOOP_INSTALL/bin/hadoop classpath)
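The resulting value is one long colon-separated string, which is hard to read. A minimal sketch for inspecting it one entry per line follows; the function name list_classpath_entries and the sample value are made up for illustration, and the real output of hadoop classpath depends on the installation.

```shell
# Hypothetical sample standing in for the real output of `hadoop classpath`.
sample_classpath='/usr/local/hadoop/etc/hadoop:/usr/local/hadoop/share/hadoop/common/lib/*:/usr/local/hadoop/share/hadoop/common/*'

# Print one classpath entry per line so wildcard folders are easy to spot.
list_classpath_entries() {
    printf '%s\n' "$1" | tr ':' '\n'
}

list_classpath_entries "$sample_classpath"
```

On a real setup, the same function can be called as list_classpath_entries "$HADOOP_CLASSPATH".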

I develop the Hadoop applications on a different machine than the RPis. We do not have to, but I recommend this approach. It has a few pros and almost no cons.

- Separating the development environment from production is necessary. We can break anything at any time in development. It shouldn’t interfere with the RPi cluster.

- Since the development environment is fragile, I definitely encourage you to use a virtual Linux machine. My setup includes Ubuntu 14.04 LTS running on a Mac host with VirtualBox. You can back up and restore easily.

- I installed a single-node Hadoop on the virtual Linux. My HADOOP_INSTALL variable is /usr/local/hadoop. My Java files also reside there.

The jar files in HADOOP_CLASSPATH are enough to compile Java code. I preferred to create a folder named /usr/local/hadoop/my_jam_analysis and put all my compiled .class files under it.

javac -classpath ${HADOOP_CLASSPATH} -d /usr/local/hadoop/my_jam_analysis \
    /usr/local/hadoop/*.java

Although this very simple setup is enough to compile Hadoop code written in Java, it is far from enough to compile the Hadoop unit tests.

Apache MRUnit is designed for writing MapReduce unit tests. You can download the source code and binaries from here. I downloaded the zip file containing the binaries and extracted it. Then I copied all the contents to my virtual Linux.

mkdir /home/hduser/dev
mkdir /home/hduser/dev/apache-mrunit-1.1.0-hadoop2-bin
cp -a /media/sf_Downloads/apache-mrunit-1.1.0-hadoop2-bin/. \
    /home/hduser/dev/apache-mrunit-1.1.0-hadoop2-bin/
cd /home/hduser/dev/apache-mrunit-1.1.0-hadoop2-bin/

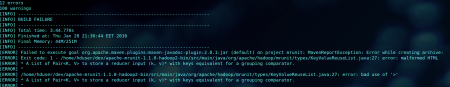

mvn package -Dhadoop.version=2

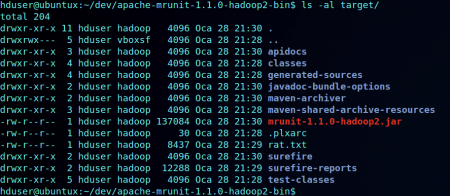

Despite the error, the mrunit-1.1.0-hadoop2.jar file is generated under the folder /home/hduser/dev/apache-mrunit-1.1.0-hadoop2-bin/target/. To compile and run the unit tests, our classpath has to cover three things:

- Hadoop classpath

- lib jars for mrunit

- Our .class files

In an ideal world, we could combine them, separated by colons (:), and our classpath would be ready. However, the problem I encountered here is that some jars in different directories contain the same .class files. For example, I found MockSettingsImpl.class both in a jar on the Hadoop classpath and in another jar under the lib folder of MRUnit. Java then reports, misleadingly, that it cannot find that class. In fact, it cannot identify it uniquely. What I did was mimic the exclusion syntax of dependency resolution tools. It is not elegant in any way, but it does its job pretty well. Here is the environment variable setting in .bashrc:

export HADOOP_JAVA_PATH=$(echo $HADOOP_CLASSPATH | \
sed 's@\/usr\/local\/hadoop\/share\/hadoop\/common\/lib\/\*@'"$(readlink -f /usr/local/hadoop/share/hadoop/common/lib/* \
| grep -v mockito | sed -e ':a' -e 'N' -e '$!ba' -e 's/\n/:/g')"'@g'):/home/hduser/dev/apache-mrunit-1.1.0-hadoop2-bin/lib/*:/home/hduser/dev/apache-mrunit-1.1.0-hadoop2-bin/target/mrunit-1.1.0-hadoop2.jar:/usr/local/hadoop/my_jam_analysis/
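To see which classes clash in the first place, the jar contents can be listed and compared. The sketch below is not part of my original setup. It assumes the unzip tool is installed, and the function names list_classes and find_duplicates are made up for illustration.

```shell
# List every .class entry of the given jars as "<class path><TAB><jar>".
# Assumes `unzip` is available; `unzip -Z1` prints entry names only.
list_classes() {
    for jar in "$@"; do
        unzip -Z1 "$jar" 2>/dev/null \
            | awk -v jar="$jar" '/\.class$/ { print $0 "\t" jar }'
    done
}

# Read "<class path><TAB><jar>" lines from stdin and print each class
# that appears in more than one jar, followed by the jars containing it.
find_duplicates() {
    awk -F '\t' '
        { count[$1]++; jars[$1] = jars[$1] " " $2 }
        END { for (c in count) if (count[c] > 1) print c ":" jars[c] }'
}

# Example call (paths taken from the setup described in this post):
# list_classes /usr/local/hadoop/share/hadoop/common/lib/*.jar \
#     /home/hduser/dev/apache-mrunit-1.1.0-hadoop2-bin/lib/*.jar | find_duplicates
```

Any class printed by find_duplicates is a candidate for the kind of conflict described above, and the jar names next to it show which file to exclude.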

Let me break the export line into meaningful chunks and explain them briefly.

readlink -f /usr/local/hadoop/share/hadoop/common/lib/*

This lists every file under the given folder with its full path.

readlink -f /usr/local/hadoop/share/hadoop/common/lib/* | grep -v mockito

We just remove the ones containing mockito in their names. This part is crucial. The exclusion actually happens here.

readlink -f /usr/local/hadoop/share/hadoop/common/lib/* | grep -v mockito \
    | sed -e ':a' -e 'N' -e '$!ba' -e 's/\n/:/g'

After removing the conflicting jar, we concatenate the paths of the other jars under that folder, putting a colon (:) between each of them.
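The filter-and-join step can be tried in isolation on a small list. The jar names below are made up for illustration. The paste variant is an alternative I am adding here for comparison, not something the export line uses.

```shell
# Hypothetical jar list standing in for the readlink output.
sample_jars='/lib/a.jar
/lib/mockito-all.jar
/lib/b.jar'

# Same filter-and-join steps as above: drop mockito, then let sed
# pull all lines into one buffer and turn the newlines into colons.
joined_sed=$(printf '%s\n' "$sample_jars" | grep -v mockito \
    | sed -e ':a' -e 'N' -e '$!ba' -e 's/\n/:/g')

# Alternative join: paste -s merges all lines, -d: sets the separator.
joined_paste=$(printf '%s\n' "$sample_jars" | grep -v mockito | paste -sd: -)

echo "$joined_sed"
echo "$joined_paste"
```

Both commands should print the same colon-separated line without the mockito entry.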

$(echo $HADOOP_CLASSPATH | sed \
's@\/usr\/local\/hadoop\/share\/hadoop\/common\/lib\/\*@'"$(readlink -f /usr/local/hadoop/share/hadoop/common/lib/* \
| grep -v mockito | sed -e ':a' -e 'N' -e '$!ba' -e 's/\n/:/g')"'@g')

Before adding the other jars to the classpath, we replace the wildcard folder entry inside HADOOP_CLASSPATH with the list of individual jars we constructed just before. As the last step, we append the other jar locations.

With only HADOOP_JAVA_PATH, we are able to compile and run our unit tests and Hadoop code. In the next post, I will show how.
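The same replacement can be written as two small functions, which some readers may find easier to follow than the one-liner. This is only a sketch of the idea. The names expand_dir_excluding and replace_wildcard are hypothetical, and unlike the original it compares whole classpath entries instead of using a sed substitution.

```shell
# expand_dir_excluding DIR PATTERN
# Print every regular file in DIR whose path does not match PATTERN,
# joined with colons.
expand_dir_excluding() {
    for f in "$1"/*; do
        [ -f "$f" ] && printf '%s\n' "$f"
    done | grep -v "$2" | paste -sd: -
}

# replace_wildcard CLASSPATH DIR PATTERN
# Swap the "DIR/*" entry of CLASSPATH for the explicit, filtered jar list
# and leave every other entry untouched.
replace_wildcard() {
    expanded=$(expand_dir_excluding "$2" "$3")
    printf '%s\n' "$1" | tr ':' '\n' \
        | awk -v w="$2/*" -v r="$expanded" \
            '{ if ($0 == w) print r; else print $0 }' \
        | paste -sd: -
}

# Example call (paths taken from the setup described in this post):
# replace_wildcard "$HADOOP_CLASSPATH" /usr/local/hadoop/share/hadoop/common/lib mockito
```

The MRUnit lib folder, the MRUnit jar and the folder with our .class files would still have to be appended to the result, as in the export line.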
